And smarter people are better at playing cooperate in prisoners' dilemma games. I'd had this hanging in my to-read file from a Marginal Revolution assorted-links post for a while and had missed the original paper when it came out. But here's the write-up from Pseudoerasmus:

The post also extols the merits of patience.

Intelligence and Cooperation

In the workhorse model of (non)cooperation, the prisoner’s dilemma, two players are faced with the decision to cooperate or defect based on a matrix of 4 possible payoff combinations.

Suppose a sedentary peasant would end up with $4 (the loot + his own output), if he ambushed and robbed a passing horse-backed nomad, but only $3 if he traded goods with him. The nomad faces the identical decision: $4 with robbing, $3 with trading. If both decided to rob, then they would be left with $2 each.

It’s set up so that each has a perfectly rational self-interest in robbing the other, but the trading world is clearly better than the both-turn-to-robbery world.

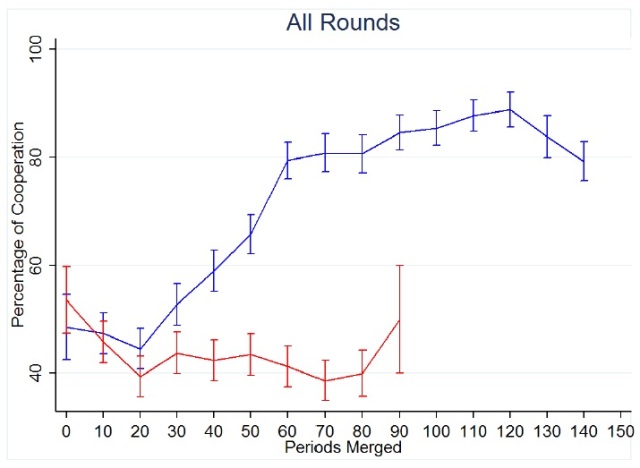
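To make the payoff logic concrete, here is a minimal Python sketch of the peasant-and-nomad game. The $4, $3 and $2 payoffs come from the quoted text; the payoff for trading while the other side robs is not stated there, so `ASSUMED_SUCKER_PAYOFF = 1` is purely my placeholder, and the function names are hypothetical.

```python
# Payoffs keyed by (my move, other's move) -> my payoff in dollars.
# 4, 3 and 2 are taken from the quoted example.
# ASSUMED: the post does not give the payoff for trading while being robbed;
# 1 is an illustrative placeholder that keeps the usual dilemma ordering.
ASSUMED_SUCKER_PAYOFF = 1

PAYOFF = {
    ("rob", "trade"): 4,    # I rob a trader
    ("trade", "trade"): 3,  # we both trade
    ("rob", "rob"): 2,      # we both rob
    ("trade", "rob"): ASSUMED_SUCKER_PAYOFF,  # I trade and get robbed
}
MOVES = ("trade", "rob")


def best_reply(other_move):
    """Return the move that maximises my payoff against a fixed opposing move."""
    return max(MOVES, key=lambda mine: PAYOFF[(mine, other_move)])


def is_dominant(move):
    """A move is dominant if it is the best reply to every opposing move."""
    return all(best_reply(other) == move for other in MOVES)


if __name__ == "__main__":
    for other in MOVES:
        print(f"If the other player chooses {other!r}, my best reply is {best_reply(other)!r}")
    print("Robbing is dominant:", is_dominant("rob"))
    print("Both trading beats both robbing:",
          PAYOFF[("trade", "trade")] > PAYOFF[("rob", "rob")])
```

Under that assumed placeholder, robbing is the best reply whatever the other player does, yet both players end up worse off than if they had both traded, which is the dilemma in a nutshell.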

It’s well known from simulations of “infinitely repeated” prisoner’s dilemma games that cooperation is best sustained when both players adopt some variety of conditional cooperation strategy: cooperate first, but then copy the other player’s earlier choice. [Axelrod] The gains to both parties are bigger in the long run if both parties behave like that. And, as we have already seen, real people do in practice show an instinct for conditional cooperation.

But Proto, Rustichini & Sofianos (2014) demonstrate that in a multi-round Prisoners’ Dilemma experiment with actual human participants and real money, the intelligent are much more likely to practise conditional cooperation.

In this experiment, subjects were first administered Raven’s Progressive Matrices, a test which measures fluid intelligence (i.e., not based on knowledge). They were also tested for risk attitudes and the Big Five personality traits. In the end, 130 participants were allocated to two groups, “high Raven” and “low Raven”, and the only statistically significant difference between the two groups was in fluid intelligence. The participants did not know how they were grouped. (Edit: Also relevant: “Participants in these non-economists sessions had not taken any game theory modules or classes.”)

Then within each group, different pairs of participants repeatedly played the prisoner’s dilemma. The maximum number of rounds was 10, but a computer decided whether to terminate the session after each round with a fixed probability. There were multiple sessions of these rounds of games.

[Blue: high Ravens group, Red: low Ravens group. X-axis: each period represents 10 rounds; Y-axis: the fraction of players cooperating.]

The high Raven group not only diverged early from the low Ravens, but also sustained cooperation much longer. There actually wasn’t much difference between the two groups in the early rounds. The difference grew incrementally, in dribs and drabs, but in the end, it was substantial. This suggests high Ravens learnt the optimal behaviour from the previous rounds better than the other group.

Proto et al. sliced and diced the data in various ways and found that:

- reciprocation is much stronger with the smart: high Ravens are more likely than the low Ravens to match prior cooperation with cooperation in kind, and punish prior defection with defection in kind;

- reaction times — the time it took to decide whether to cooperate or defect — were shorter and declined faster for the high Ravens;

- the only statistically significant difference in individual participant characteristics was fluid intelligence;

- when the monetary payoffs were manipulated to make cooperation less profitable in the long run, the high Ravens were no more cooperative than the low Ravens; if anything, the low Ravens were slightly more cooperative!

But the most amazing result has to be this: “Low Raven subjects play Always Defect with probability above 50 per cent, in stark contrast with high Raven subjects who play this strategy with probability statistically equal to 0. Instead, the probability for the high Raven to play more cooperative strategies (Grim and Tit for Tat) is about 67 per cent, while for the low Raven this is lower (around 45 per cent).”

Understanding the benefits of working together in complex situations (which is what a repeated prisoner’s dilemma simulates) implicitly requires reasoning skills, the ability to learn from mistakes, the ability to anticipate, and accurate beliefs about other people’s motives.

The ethical implication: the intelligent are more likely to practise the Golden Rule, and this actually breeds trust; and the less intelligent are more likely to think they can get away with it, and this breeds mistrust. You only need intelligence to generate this difference. You can immediately see where social and civic capital might come from, at least in part.

The Proto et al. study replicates and extends a few earlier studies on intelligence and cooperation (Al-Ubaydli et al. 2014; Jones 2008). Moreover, it’s consistent with cross-cultural findings from Public Goods Games as described below.
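For readers who want to see how the three strategies named in the quoted passage (Always Defect, Tit for Tat and Grim) behave in a repeated game with random termination, here is a rough Python sketch. It is my illustration, not the experimenters' code: the payoffs reuse the $4/$3/$2 example from the excerpt, the sucker payoff `S = 1` is an assumption, and `continue_prob` is a hypothetical parameter because the excerpt does not give the termination probability. The threshold function uses the standard grim-trigger condition: cooperation is worth sustaining when the continuation probability is at least (T - R) / (T - P).

```python
import random

# T, R, P come from the quoted example (rob a trader, both trade, both rob).
# ASSUMED: S, the payoff for trading while being robbed, is not in the post.
T, R, P, S = 4, 3, 2, 1

# (my move, other's move) -> my payoff; "C" = cooperate/trade, "D" = defect/rob.
PAYOFF = {("C", "C"): R, ("C", "D"): S, ("D", "C"): T, ("D", "D"): P}


def always_defect(my_history, their_history):
    """Defect in every round, regardless of what has happened."""
    return "D"


def tit_for_tat(my_history, their_history):
    """Cooperate first, then copy the other player's previous move."""
    return "C" if not their_history else their_history[-1]


def grim(my_history, their_history):
    """Cooperate until the other player defects once, then defect forever."""
    return "D" if "D" in their_history else "C"


def play_match(strategy_a, strategy_b, continue_prob, rng, max_rounds=10):
    """Play one match; after each round it continues with probability continue_prob.

    Returns (total payoff of A, total payoff of B, rounds played).
    """
    history_a, history_b = [], []
    total_a = total_b = 0
    for _ in range(max_rounds):
        move_a = strategy_a(history_a, history_b)
        move_b = strategy_b(history_b, history_a)
        total_a += PAYOFF[(move_a, move_b)]
        total_b += PAYOFF[(move_b, move_a)]
        history_a.append(move_a)
        history_b.append(move_b)
        if rng.random() >= continue_prob:  # the computer ends the match
            break
    return total_a, total_b, len(history_a)


def grim_cooperation_threshold():
    """Smallest continuation probability at which cooperating against Grim
    pays at least as much as defecting once and being punished forever."""
    return (T - R) / (T - P)


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so runs are repeatable
    hypothetical_continue_prob = 0.75  # illustrative value only
    for name, opponent in [("Tit for Tat", tit_for_tat), ("Always Defect", always_defect)]:
        result = play_match(tit_for_tat, opponent, hypothetical_continue_prob, rng)
        print(f"Tit for Tat vs {name}: {result}")
    print("Grim cooperation threshold:", grim_cooperation_threshold())
```

With the payoffs from the excerpt the threshold works out to 0.5, and it does not depend on the assumed sucker payoff. That fits the finding about manipulated payoffs in the list above: once cooperation stops paying in the long run, a conditional cooperator has no reason to keep cooperating.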

I'm looking forward to reading Hive Minds when it is published next week. As I understand the blurb, it is about the implications of collective intelligence for countries.
